Making Complexity Manageable

A multi-phase research program to make a highly configurable product easier for administrators to learn, trust, and manage.

End-to-End Research

My role: Senior UX Researcher. I led this program end to end — framing the problem space, choosing methods, running studies, synthesizing across threads, and helping shape product direction.

Company & product: D2L, Brightspace learning platform

Audience: Administrators across higher education, K–12, and corporate learning environments

Scope: Multi-phase research program

Methods: Product analytics, survey research, interviews, task analysis, workflow research, heuristic evaluation, concept evaluation, and usability testing

Overview

D2L launched an initiative to improve the administrator experience by reducing the learning curve, improving ease of use, and helping customers get value faster from a highly configurable product.

Brightspace administrators set up and maintain a large digital learning platform. Their work shapes who can access which tools and information, how the platform itself is structured, how third-party tools are added and maintained, and how old data is handled safely over time. Brightspace is powerful, but that flexibility also makes some administrator workflows difficult to learn, difficult to manage, and, in some cases, difficult to scale.

I led a connected program of research that started by identifying the most-used and most difficult administrator workflows, then followed those signals into deeper studies on platform structure, integrations, data management, and permissions. Over time, the work shifted the question from “How do we simplify this?” to “How do we make necessary complexity feel clearer, safer, and more manageable?”

Starting with the administrator experience as a whole

Before going deep on individual workflows, I needed a clear picture of what administrators actually do: which tools they use most often, which responsibilities take the most time, and which parts of the experience feel most important and most difficult. That broader view mattered because it let me decide where to focus first based on evidence instead of internal assumptions.

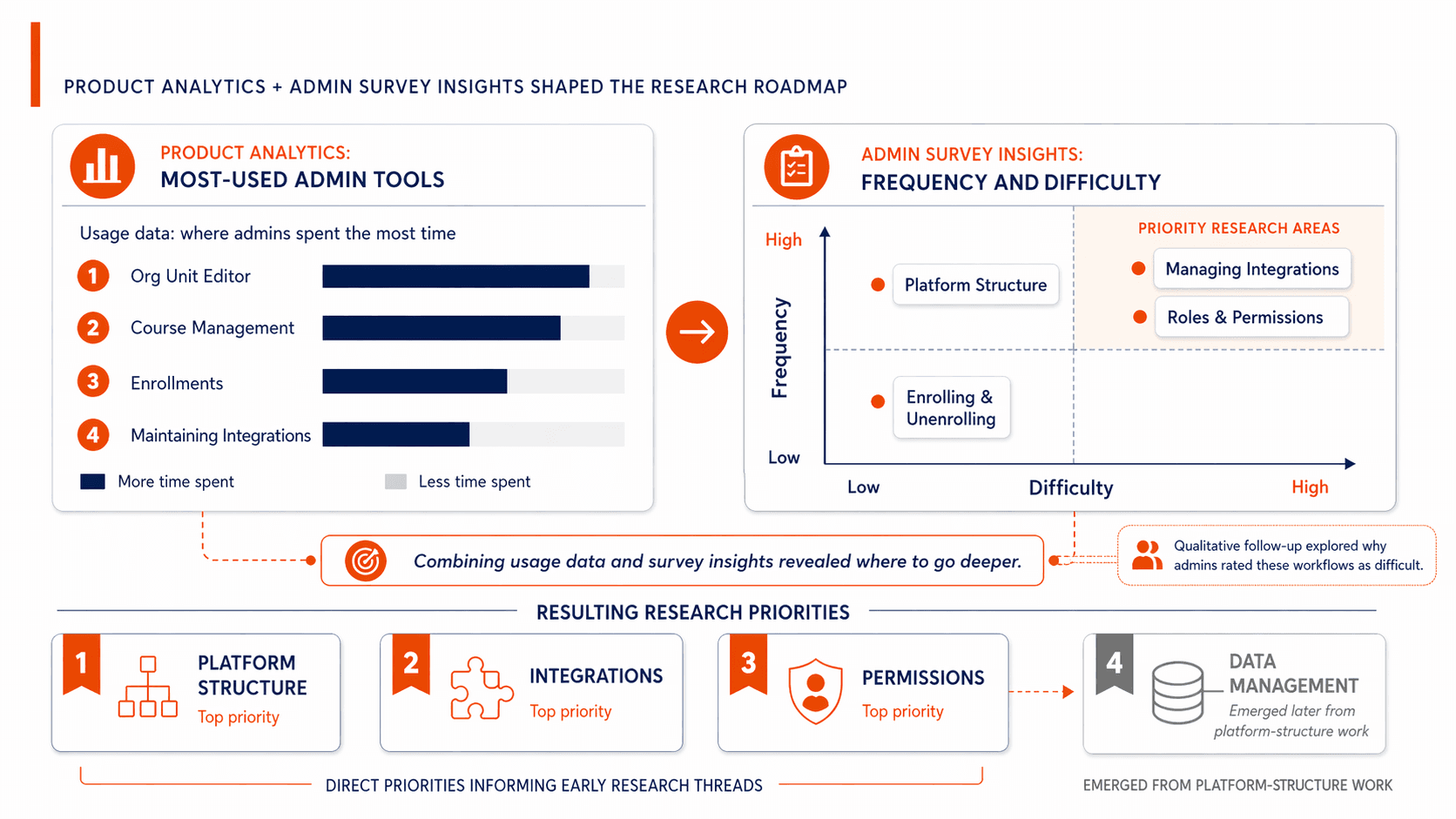

I started with two complementary inputs. First, I used product analytics in Power BI to understand which administrative tools were most heavily used. Platform-structure tools like the org unit editor and course-management tools stood out immediately. Second, and in parallel, I led a broad administrator survey (112 respondents) and followed it with qualitative research (12 sessions with LMS administrators) so I could understand the “why” behind the patterns, not just the rankings. Together, those inputs showed that platform structure, integrations, and roles and permissions were all important opportunities for improvement, but in different ways: platform-structure tools were among the most heavily used, while integrations and permissions surfaced as especially difficult and important.

Research goal: Build a broad view of the administrator experience: what administrators are responsible for, which tools they use most often, and which workflows are both important and difficult.

Why this was the first step: Before deciding where to go deep, I needed evidence about where administrators spend time and where friction is most concentrated.

Methods: Product analytics, survey research, and qualitative follow-up interviews.

Outcome: This gave me a prioritization map of the admin experience: which tools were most used, which workflows felt most challenging, and where the product had the biggest opportunity to improve.

What it led to: Three major threads emerged: platform structure, integrations, and permissions. A fourth thread — data management — emerged later from the platform-structure work itself.

Following the work into the platform’s structure

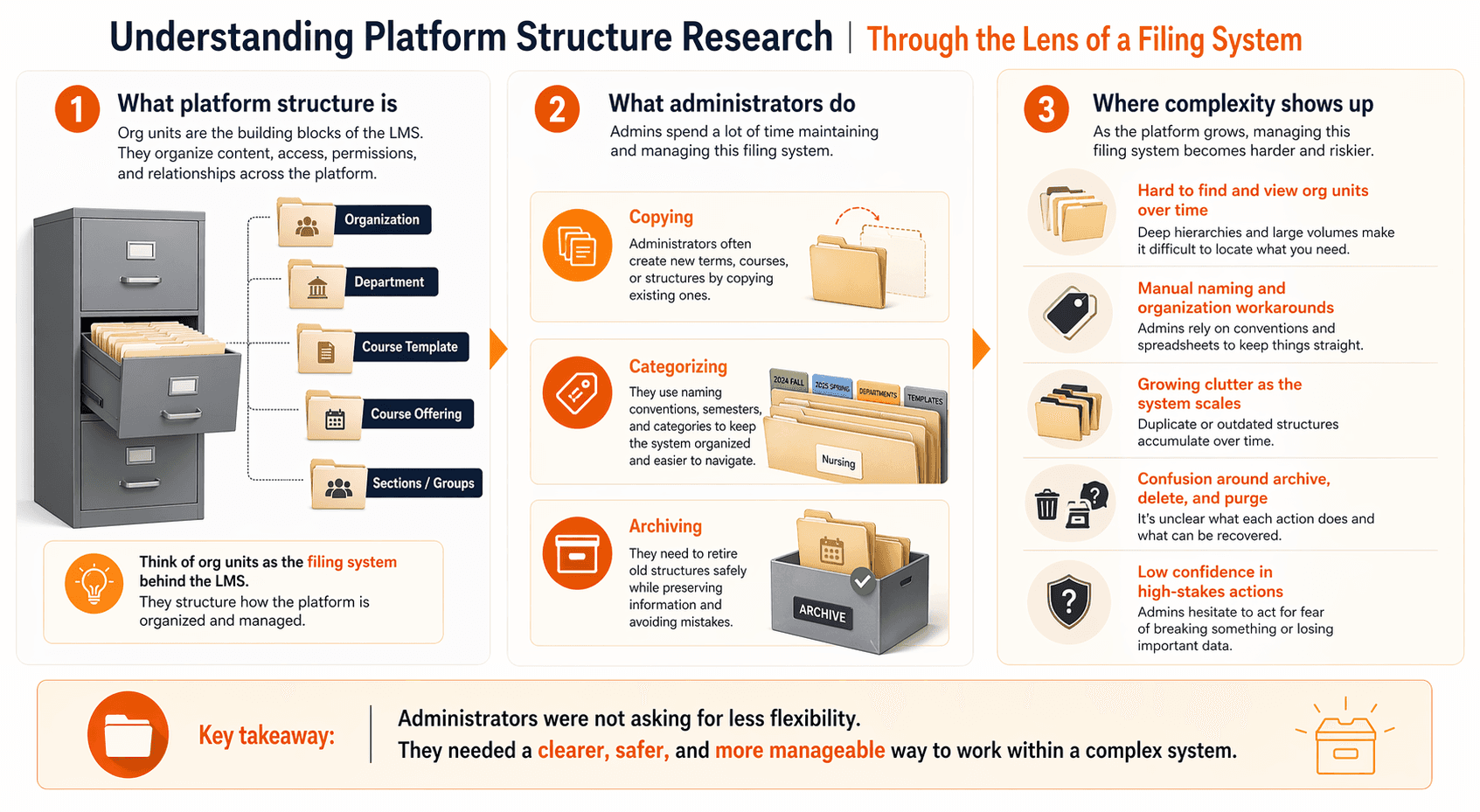

One of the first places I went deep was the platform’s underlying structure. In Brightspace, “org units” are not just about courses. They are the structural backbone of the platform, shaping how content, access, permissions, and relationships are organized and managed. Because so much of the administrator experience depends on that structure, problems here have ripple effects throughout the product.

To make that structure legible for stakeholders, I used the metaphor of a filing cabinet. Org units function like the folders and files that keep the whole system organized. Administrators are constantly creating, copying, categorizing, and archiving them. That metaphor made the problem much easier to see: the cabinet kept getting fuller, the folders were hard to find, and the archive process did not work the way people expected. In research with 8 Brightspace administrators, I found that administrators were spending significant effort creating and copying structural objects, using naming conventions and categorization workarounds to stay organized, and struggling to find and manage what they had created over time. The issue was not just clutter. Foundational parts of the system felt harder and riskier to manage than they needed to.

Research goal: Understand how administrators create, organize, copy, archive, and maintain the platform’s underlying structure over time.

Why this was the next step: These tools were heavily used, central to day-to-day administration, and foundational to the rest of the platform.

Methods: Semi-structured interviews, workflow walkthroughs, and concept evaluation.

Outcome: This work clarified that the problem was not just visual clutter. Administrators were relying on manual systems and workarounds to manage the structural backbone of the platform because the current experience was hard to navigate, hard to trust, and hard to maintain.

What it led to: The strongest recurring signal in this work was confusion around archive, delete, and cleanup behavior, which pointed to a broader data-management problem.

What changed next: This work helped move redesign efforts for the org unit editor and course-management tools onto the roadmap. I then led the research through multiple stages, from exploratory work to concept and usability testing, helping shape improvements including bulk management and tagging/categorization. The redesigned tools began rolling out in 2025.

Reimagining the integrations experience

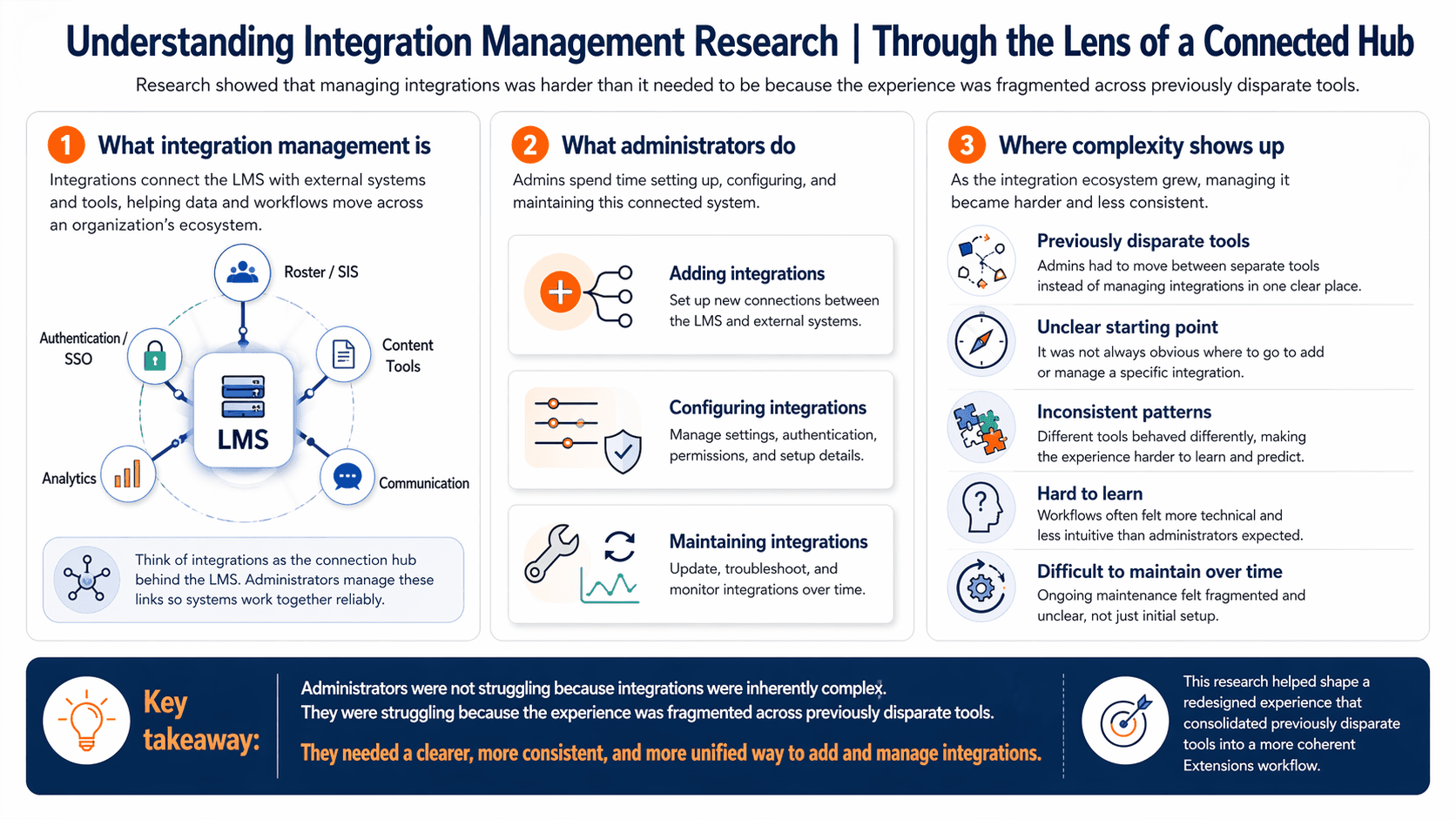

At the same time, a parallel thread was becoming impossible to ignore: integrations. In the broader admin research, integration work surfaced as both frequent and difficult. Administrators were setting up and managing third-party tools regularly, often independently, and describing the process as complex, anxiety-inducing, and costly to manage well. The foundational research made it clear that this was not a niche task — it was a recurring part of the administrator role and a strong candidate for strategic improvement.

I followed that signal with a dedicated exploratory study on integration setup and management. I ran 15 sessions with Brightspace administrators to understand the workflow in detail, including who was involved, what information administrators needed, how they tracked usage, and where the process broke down. That work showed two major problems. First, setup often depended on incomplete or inconsistent vendor documentation, which forced administrators to chase information, guess at terms, and spend time managing vendor relationships just to get the basics in place. Second, the experience was fragmented across multiple tools. There was no single place to set up, edit, remove, and understand integrations, and administrators lacked reliable visibility into what was in use. That fragmentation had real downstream costs for customers, including time spent managing tools manually and continued spend on integrations that were no longer needed.

Research goal: Understand the workflow, information needs, and pain points involved in setting up and managing third-party tool integrations.

Why this was the next step: Foundational admin research showed that integrations were both frequent and difficult, making them a high-value area for deeper investigation.

Methods: Survey synthesis, exploratory interviews, and workflow mapping, followed by successive evaluative studies.

Outcome: This work reframed integrations from a set of isolated frustrations into a larger systems problem. It helped the team align around a more unified integrations experience and contributed to a redesign that consolidated previously disparate tools into a cleaner, more consistent, easier-to-use experience.

What it led to: It became one of the clearest examples in the program of how a fragmented, high-stakes workflow could be rethought at the system level rather than patched locally.

When structure problems pointed to a data-management problem

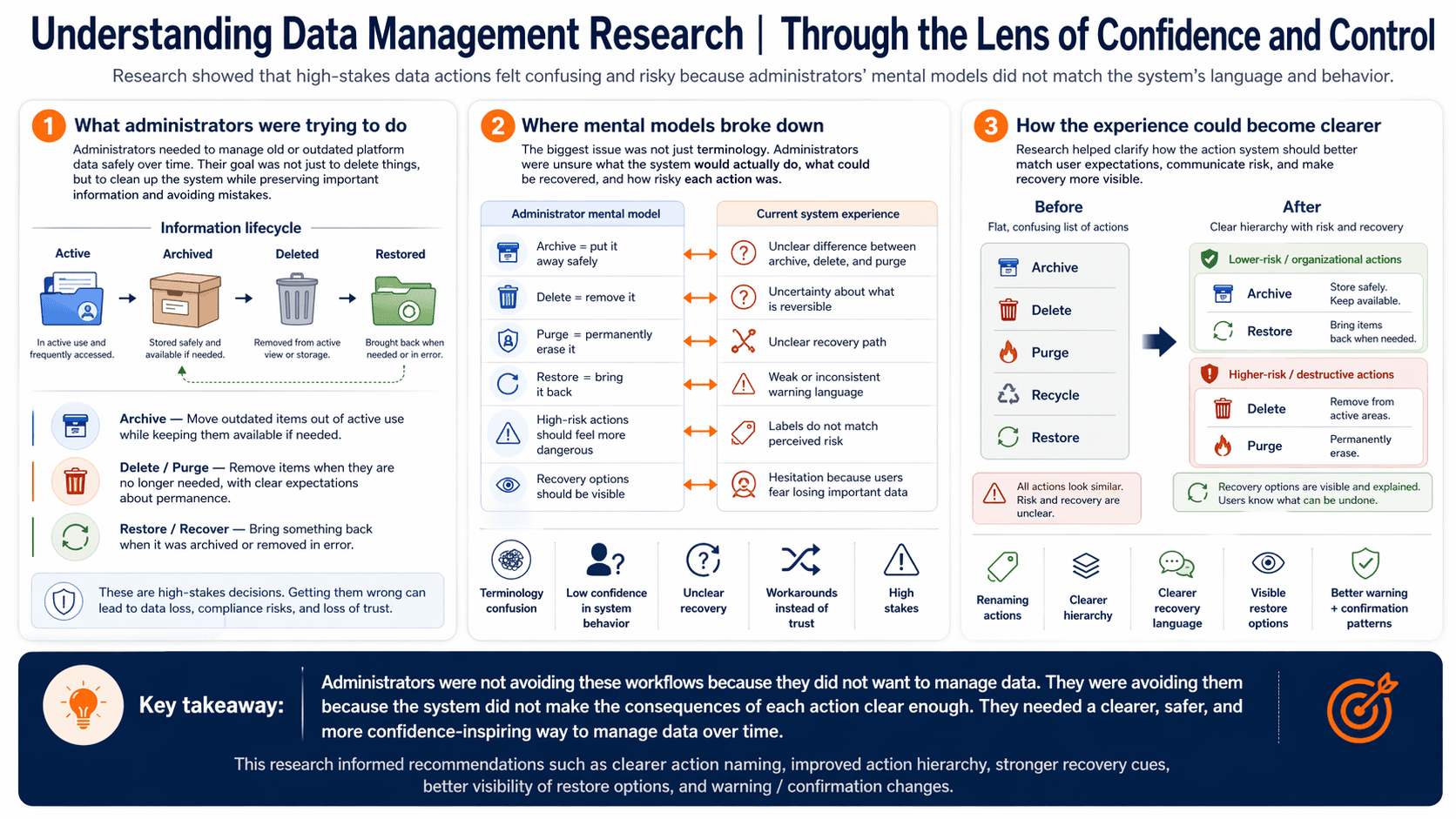

One thing that kept surfacing in the platform-structure work was that administrators were not using archive, delete, and related actions as intended. That mattered because these were not small housekeeping tasks. Old structural objects accumulated over time, and uncertainty about what actions meant made the system harder to trust and harder to maintain.

That led me into a follow-on project focused more directly on data management. I started with interviews to understand administrators’ mental models for archive, recycle, delete, and purge, and paired that with a heuristic evaluation of the current data-purge tool, which was barely used. The pattern was clear. Administrators were hesitant to act because the language and system behavior did not match their expectations. In some cases, they created naming conventions or workaround structures instead of relying on the product’s built-in actions. The issue was not just terminology. It was confidence.

Research goal: Understand how administrators interpret archive, recycle, delete, and purge actions — and where the product’s language and behavior break their mental model.

Why this was the next step: The org-unit work repeatedly surfaced hesitation and workaround behavior around cleanup actions, suggesting that the issue was broader than one tool.

Methods: Interviews, mental-model exploration, heuristic evaluation, concept evaluation, and workflow testing.

Outcome: This work made the trust problem more concrete. It gave the team a clear explanation for why built-in actions were underused and turned that insight into both long-term design direction and short-term improvements that could fit the roadmap.

What it led to: It reinforced a larger pattern across the admin experience: when the product felt unclear in a high-stakes area, administrators built their own safeguards instead of relying on the system.

What changed next: A full redesign was not immediately feasible, so I worked with the product manager, designer, and dev manager to translate the research into changes that could fit the roadmap sooner. That included renaming actions, clarifying the hierarchy among archive, delete, and purge, improving recovery language, making restore options more visible, and refining warning and confirmation patterns. The goal was not to wait for a perfect future state. It was to use research to reduce risk and confusion in the experience as early as possible.

Permissions and administration at scale

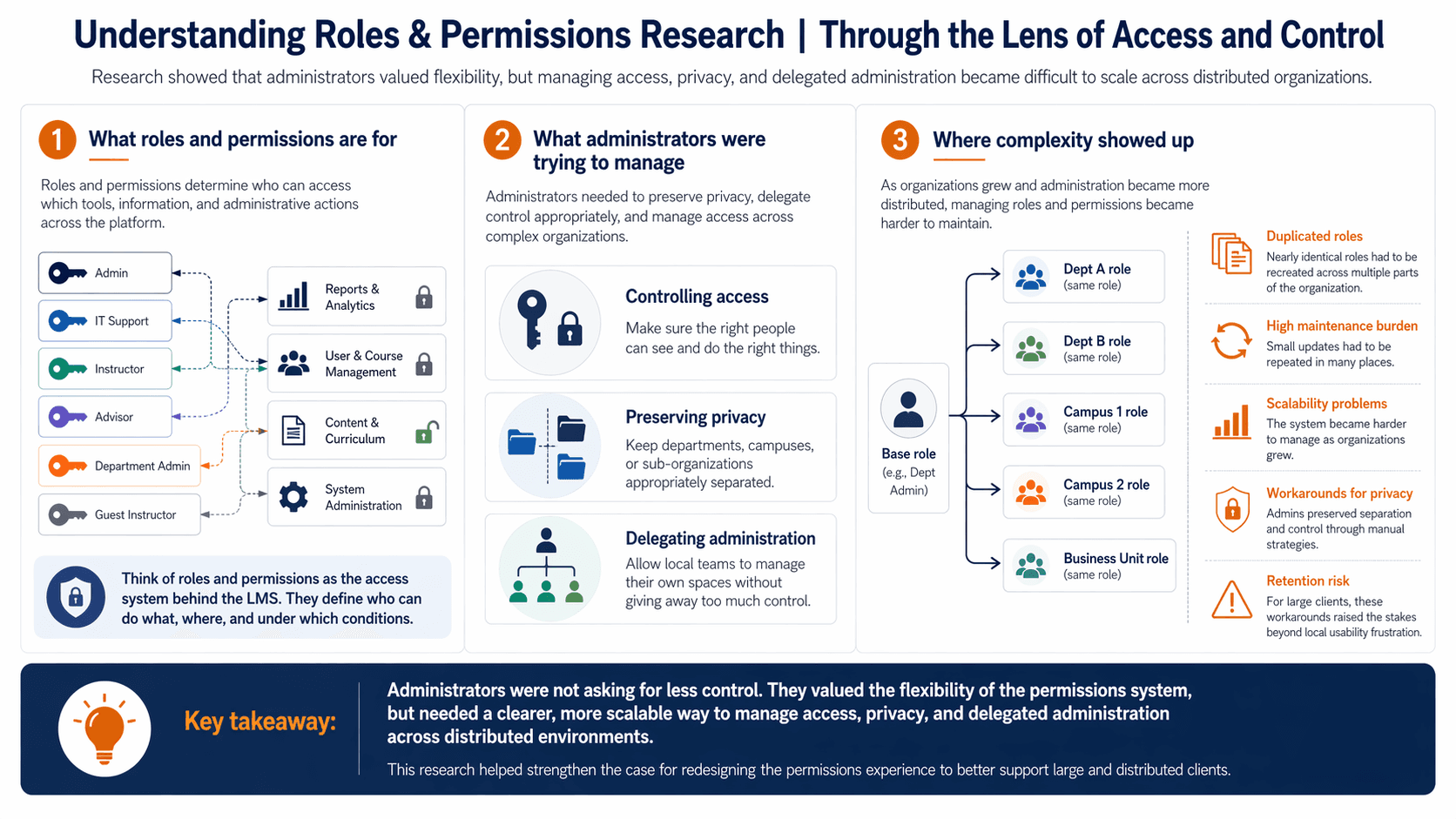

While the platform-structure work was surfacing one set of issues, the broader administrator research was also pointing to another major friction point: roles and permissions. These workflows were both important and difficult, especially for large organizations managing multiple departments, campuses, or sub-organizations. At the same time, we were hearing directly from larger clients who wanted better support for distributed instances of Brightspace.

That made this a clear next step. I triangulated client signals with broader admin research and then went deeper through interviews and workflow research to understand how administrators were managing access, privacy, and delegated control at scale. I found that administrators valued the granularity of the permissions system but relied on brittle workarounds to preserve privacy and separation. In practice, that meant duplicating nearly identical roles across different parts of the organization. The flexibility was valuable; the maintenance burden was not. More importantly, multiple large clients made it clear that these workarounds made it harder to scale their organizations, which raised the stakes from a local usability problem to a long-term retention risk.

Research goal: Understand how administrators manage access, privacy, and delegated control across distributed environments.

Why this was the next step: Permissions had already surfaced as high-importance and high-difficulty in the broader admin research, and large clients were actively asking for better support in distributed environments.

Methods: Client/stakeholder triangulation, interviews, and workflow research.

Outcome: This work reframed permissions as more than a usability issue. It showed that administrators were preserving privacy and control through duplicated roles and manual workarounds, creating a maintenance burden that would only grow with scale.

What it led to: It strengthened the larger program insight that administrators were not asking for less flexibility — they needed safer, clearer ways to manage necessary complexity.

What changed next: This work helped move a permissions redesign onto the roadmap, with a focus on simplifying the tool overall and making it easier for large and distributed clients to manage access at scale. It also strengthened trust with key clients by showing that we were not treating their needs as edge cases, but as important signals about the future of the product.

Reflection

What the program taught me

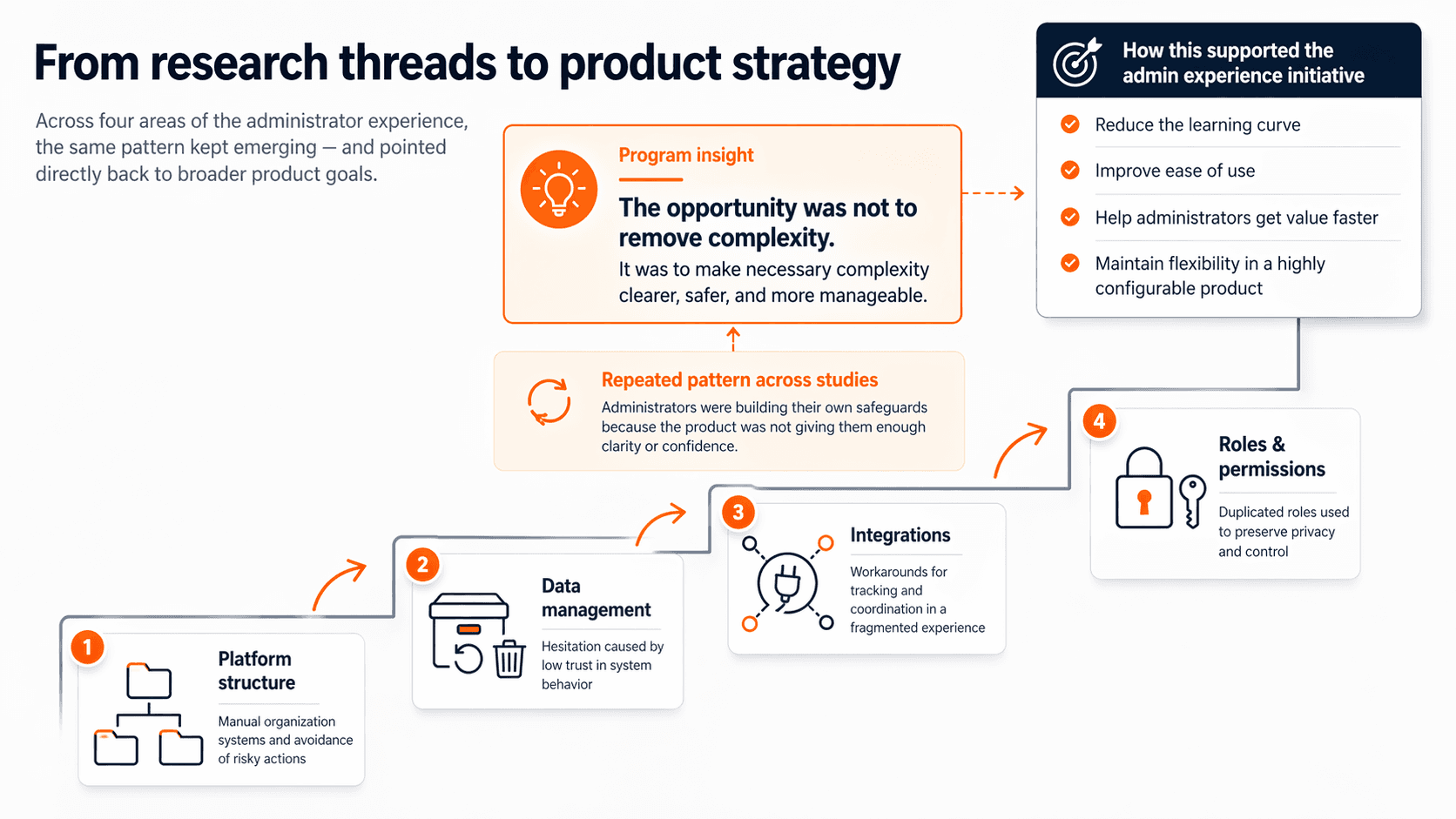

Over time, these stopped feeling like separate workflow problems. The same pattern kept appearing in different parts of the admin experience: administrators were not asking for less control or less flexibility. They were asking for clearer, safer ways to work within a genuinely complex system.

In structure workflows, they created manual organization systems and avoided risky actions. In data-management workflows, they hesitated because they did not trust what the system would do. In integrations, they built their own tracking and coordination systems to compensate for a fragmented experience. In permissions workflows, they duplicated roles to preserve privacy and control. Across all of them, the same thing was happening: people were building their own safeguards because the product was not giving them enough clarity or confidence.

The opportunity was not to flatten the system. It was to make necessary complexity more manageable.

What changed because of the work

This program helped shift the team’s framing. Instead of treating admin problems as isolated usability issues, it showed that several of the most painful workflows were connected by a larger challenge: helping administrators work safely and confidently in a highly configurable system.

It also directly supported the goals of the broader D2L initiative. The work reduced uncertainty about where to start, clarified which workflows were most important to improve, and gave teams a connected understanding of the admin experience rather than a set of disconnected feature complaints. It informed redesign work in foundational structure tools, helped reimagine the integrations experience through a new unified tool, surfaced smaller data-management improvements that could fit the roadmap when a full redesign was not feasible, and strengthened the case for permissions work that would better support large distributed clients. Just as importantly, it helped build empathy across product, design, and development teams by making administrator needs, workarounds, and constraints much more visible. The overall result was a more strategic approach to improving the admin experience: one that supported ease of use and time to value without sacrificing the configurability customers needed.

What I’d improve

If I had the opportunity to continue this work, I would connect the story more directly to downstream product and release outcomes over time. I would want to measure whether the administrator experience actually improved using behavioral analytics and follow-up research — for example, whether use of tools like data purge increased, whether ease-of-use ratings improved when the survey was rerun, and whether the new features introduced in the redesigned experiences were actually being adopted.

I would also want to turn what this program taught us about administrators into reusable team assets, such as administrator personas or shared frameworks. One of the strengths of this work was that it created a much richer understanding of the admin experience than the organization had before; formalizing that understanding would make it easier for future teams to build on the work rather than rediscover the same patterns from scratch.